– Or: Navigate a Website on a Computer Screen in a Website on a Computer Screen –

Jerome Etienne’s post on HTML Elements in a WebGL surrounding really caught my interest. What I wanted to do was slightly different, though: I wanted a fully accessible website displayed on the screen of a computer 3D model (creating a virtual 3D office in a browser with an interactive computer, phone, stereo and such is an old dream of mine).

Jerome’s post was a good start, and he created tQuery plugins to do it. After reading the source and understanding the concept, it was a pretty easy and straightforward process, except for two issues I ran into. And here’s the result: browse this website in a 3D environment (requires Chrome and features audio, so grab some headphones and turn up the volume).

The concept

If you already read Jerome’s post, you can skip this section. If not, here’s the idea: First, you cannot really merge HTML elements into your scene. The only way to do this would be to make a “screenshot” of the rendered element, and use the resulting image as a texture for a mesh. But then you’d have to do a painful amount of work to make the element interactive – you’d have to delegate all user input correctly to the (hidden) element, let the browser render it, grab a new screenshot and update the texture. I doubt one could get acceptable performance through this technique (statement yet to be proved – in for a challenge, anyone?).

Instead, one stacks two render layers on top of each other: One layer is the WebGL canvas. The other layer is a plain HTML element, containing the element that should be part of the scene. Camera movement in WebGL is done as usual, and for the HTML element it is done using CSS 3D transforms. Yep, CSS 3D transforms can do all you need to achieve this. The tricky thing now is to sync up camera position/rotation changes for both layers.

THREE.js to the rescue: there is a CSS3DRenderer that works just like the WebGLRenderer, leveraging CSS 3D transforms. So the THREE.js setup looks like this:

- WebGLRenderer
  - WebGLScene
    - WebGLObjects
- CSSRenderer
  - CSSScene
    - CSSObjects
- globalCamera

In the update loop, you call both renderers’ render() methods and pass each one its respective scene along with the global camera.

WebGLRenderer.render(WebGLScene, globalCamera);

CSSRenderer.render(CSSScene, globalCamera);
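As a rough sketch of that update loop, both renderers can be driven from a single function so the two layers never get out of sync. The function and option names here are made up for illustration and are not taken from the experiment's source; the only assumption is that each renderer exposes a render(scene, camera) method, as THREE.js's WebGLRenderer and CSS3DRenderer do.

```javascript
// Minimal sketch: drive both layers from one shared camera.
// `createDualRenderer` and its option names are hypothetical.
function createDualRenderer({ webglRenderer, webglScene, cssRenderer, cssScene, camera }) {
  return function renderFrame() {
    // The same camera object is passed to both calls, so any position or
    // rotation change made between frames shows up in both layers.
    webglRenderer.render(webglScene, camera);
    cssRenderer.render(cssScene, camera);
  };
}

// Possible wiring in the browser (assumes the renderers, scenes and camera
// have already been created with THREE.js):
//   const renderFrame = createDualRenderer({ webglRenderer, webglScene, cssRenderer, cssScene, camera });
//   (function loop() { requestAnimationFrame(loop); renderFrame(); })();
```

Passing the dependencies in, rather than reading globals, also makes it easy to swap in stand-in objects when trying the loop outside a browser.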

Positioning the HTML element

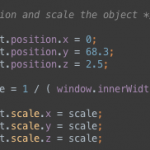

I found that one tricky to do – I took a slightly different approach here than Jerome. Figuring out where to position the element so that it looks like it is part of the computer model screen was tedious, but doable. Another thing was finding out how to scale the element correctly: The size of the rendered 3D model depends on the size of the WebGL canvas and the camera’s FOV. In the end, I played around with some numbers and figured out that I could compute the needed scale like this:

var scale = 1 / ( window.innerWidth / 61.7 );

However, I have no idea why.
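Since the formula depends on window.innerWidth, the scale presumably has to be recomputed whenever the window is resized. Here is a small sketch of that; computeScreenScale and cssObject are made-up names, and 61.7 is just the empirical constant from above, not something derived.

```javascript
// Empirical constant from the formula above; its origin is unknown.
const SCALE_FACTOR = 61.7;

// Hypothetical helper: algebraically the same as 1 / (innerWidth / 61.7).
function computeScreenScale(innerWidth) {
  return SCALE_FACTOR / innerWidth;
}

// Possible browser usage. `cssObject` is assumed to be the THREE.js object
// wrapping the iframe, and a uniform scale is assumed:
//   window.addEventListener('resize', () => {
//     cssObject.scale.setScalar(computeScreenScale(window.innerWidth));
//   });
```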

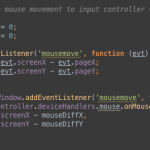

Getting events from the iframe

The HTML element I display is an iframe. Events belong to windows, so when you move the mouse over an iframe, the parent no longer receives mousemove events. That is a little annoying if you listen to those to rotate the camera, so you need to pass the events you’re interested in to the parent window yourself. There are two options here. The first is postMessage: listen to the event in the iframe, build your payload object and post it to the parent window (you can’t post the original event, as it can’t be serialized by the structured clone algorithm). The other option is to listen to the iframe’s events directly from the parent window. I chose option #2, as both windows share the same origin and it’s faster.

Obtaining the correct mouse position has another little pitfall: to get the “real” mouse coordinates, as viewed from outside of the iframe, one needs to check the screen* properties (screenX and screenY), which report the physical mouse position on the screen. All other properties hold values relative to the iframe’s original dimensions, but in our case the iframe is scaled and distorted. To match screen coordinates to window coordinates, just add another listener to the parent window and store the diffs between screen and window values.
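The coordinate bookkeeping could look roughly like this. All function names are invented for this sketch; only screenX/screenY and clientX/clientY are standard mouse event properties. It assumes the parent window has seen at least one mousemove before the iframe does, so that the offset is already known.

```javascript
// Hypothetical helper that remembers the difference between screen and
// window coordinates and applies it to events coming from the iframe.
function createScreenOffsetTracker() {
  const offset = { x: 0, y: 0 };
  return {
    // Call from a mousemove listener on the parent window.
    update(event) {
      offset.x = event.screenX - event.clientX;
      offset.y = event.screenY - event.clientY;
    },
    // Call from a mousemove listener on the iframe's window. Only the
    // screen* values are used, because they are unaffected by the iframe's
    // CSS transform.
    toParentCoords(event) {
      return { x: event.screenX - offset.x, y: event.screenY - offset.y };
    },
  };
}

// For the postMessage route: copy the needed fields into a plain object,
// since the event itself cannot be cloned.
function toPayload(event) {
  return { type: event.type, screenX: event.screenX, screenY: event.screenY };
}

// Possible same-origin wiring (`iframe` and `rotateCamera` are assumptions):
//   const tracker = createScreenOffsetTracker();
//   window.addEventListener('mousemove', (e) => tracker.update(e));
//   iframe.contentWindow.addEventListener('mousemove', (e) => {
//     rotateCamera(tracker.toParentCoords(e));
//   });
```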

Open issues

Blending. In my version, the HTML layer is on top of the WebGL layer, so that the user can directly interact with the iframe contents. That means the element will always be rendered on top, even when it should be rendered “behind” an object that lives on the WebGL layer. In my case this was no problem, as I limit player/camera movement so that the issue just doesn’t arise. Jerome has covered this more in-depth and has a solution at hand.

Summary

I really like the results of the experiments, as it’s a little “inception-esque”. You can freely navigate in the iframe and the browser just renders it for you. It’s a real browser in a 3D WebGL scene. That’s awesome! However, it only works as long as you don’t leave the same-origin area within the iframe. And if you wanted to embed an iframe into a larger scene, things would get a little more complicated: you’d have to solve the blending issue and make sure you don’t waste performance on the CSS renderer when the element is not visible, or just barely visible (for example by displaying a screenshot texture as a LOD solution).

Links / Credits

- Surf in Style: The experiment itself

- The experiment’s source code on GitHub

- The original concept is from Jerome’s post

- The audio track is Understep by Flex Blur

- The model in the scene is by jonnyl3D

Ha, even the comment form works! Made trippier by the fact that every time you hit one of WASD while typing the comment, the camera dances around.

What would happen if you got rid of the target=”_blank” on the link to the experiment? Could you run the experiment inside itself?

Yes, it will work – but it will slightly screw up camera rotation. I removed the target=_blank from the link, so you can try out _real_ recursion :)

Oh shit! You can run the webgl scene inside the webgl scene! Not sure how far you can go down until your computer explodes.

I still had reasonable performance with three recursions :)

Holy shitballs, that’s amazing. Though the music becomes pretty obnoxious three levels in!

Wow, it really DOES work